
‘I’m stretched thin.’ I’m 23 and make $75K a year living in the Northeast. What should I have done?Ĭompanies paying for abortion-related travel get more interest from job hunters. ‘I’ve suffered for a long time’: My mother demanded I return my inheritance so she could give it to my brother, who has a drug addiction. Trading in risky ‘0DTE’ stock options hits record and could spark a stock-market selloff, strategists say What can we do?Ĥ01(k) hardship withdrawals jumped 36% in the second quarter (Bloomberg) - Wall Street shook off worries over the Bank of Japan policy tweak as another round of US data bolstered bets on the so-called Goldilocks scenario of an economy that’s neither running. ‘My family is dealing with a significant shock’: My father secretly married his caregiver, who is 40 years his junior. Soup = BeautifulSoup(resp, from_encoding=().get_param('charset'))įor link in soup.find_all('a', href=True):įrom import SentimentIntensityAnalyzerĭf = df.apply(lambda x: sid.polarity_scores(x))ĭf = df.apply(lambda x:convert(x))ĭf_final = pd.I’m looking for a place that has year-round mild, sunny weather and is near or on the water, and my budget is $125,000 - where should I retire? Hopefully it helps you get going in the right direction. This sample code is from a project that I worked on a short time ago. WebDriver implementations that are W3C conformant also annotate the navigator object with a WebDriver property so that Denial of Service attacks can be mitigated. A general rule of thumb is that longer tests are more fragile and unreliable. Logging in to third-party sites using WebDriver at any point of your test increases the risk of your test failing because it makes your test longer. The API is also unlikely to change, whereas webpages and HTML locators change often and require you to update your test framework. Although using an API might seem like a bit of extra hard work, you will be paid back in speed, reliability, and stability. 
The ideal practice is to use the APIs that email providers offer, or in the case of Facebook the developer tools service which exposes an API for creating test accounts, friends, and so forth. Aside from being against the usage terms for these sites (where you risk having the account shut down), it is slow and unreliable.

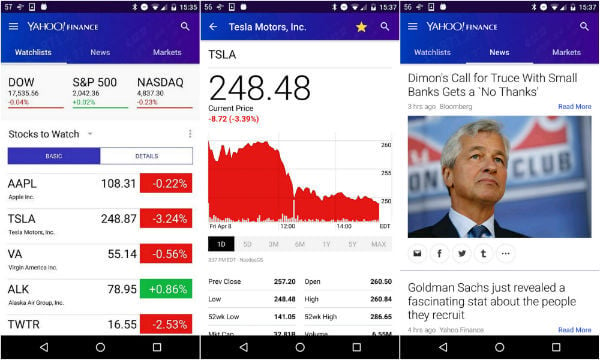

This is not best automation practice in seleniumįor multiple reasons, logging into sites like Gmail and Facebook using WebDriver is not recommended. Soup = BeautifulSoup(driver.page_source, "html.parser") New_height = driver.execute_script("return ") Last_height = driver.execute_script("return ")ĭriver.execute_script("window.scrollTo(0, ) ") Screen_height = driver.execute_script("return ") # get the screen height of the web Time.sleep(2) # Allow 2 seconds for the web page to open # Web scrapper for infinite scrolling pageĭriver = webdriver.Chrome(ChromeDriverManager().install()) import timeįrom webdriver_manager.chrome import ChromeDriverManager I've been searching everywhere for help but it seems like because the news results are loaded incrementally each time you scroll down, it complicates things much more. I'm trying to scrape and scroll infinitely till it stops however, I'm having trouble trying to scrape past the first scroll. So i'm working on a little project where i scrape yahoo finance news on a specific company and do some data analysis on it to see how news sentiment affects stock performance.
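For the incremental loading described in the question, one common approach is to scroll, wait, and stop once the page height no longer grows. The sketch below is only an illustration of that idea, not the code from the original post. The helper name `scroll_to_bottom` and its `pause` and `max_rounds` parameters are hypothetical. The use of `document.body.scrollHeight` is also an assumption, since the JavaScript in the posted snippet did not survive. It may need adjusting if the news list scrolls inside its own container.

```python
import time


def scroll_to_bottom(driver, pause=2.0, max_rounds=50):
    """Scroll until the page height stops growing; return the number of scrolls.

    `driver` is any object exposing Selenium's `execute_script(script)` method.
    `pause` and `max_rounds` are illustrative knobs, not part of Selenium.
    """
    # Assumed JavaScript: total height of the document body.
    last_height = driver.execute_script("return document.body.scrollHeight")
    rounds = 0
    while rounds < max_rounds:
        # Jump to the current bottom so the page requests the next batch.
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        time.sleep(pause)  # give the newly requested items time to load
        rounds += 1
        new_height = driver.execute_script("return document.body.scrollHeight")
        if new_height == last_height:
            break  # nothing new was appended, so assume the end was reached
        last_height = new_height
    return rounds
```

Once the function returns, `driver.page_source` can be handed to `BeautifulSoup(driver.page_source, "html.parser")` as in the question, so that parsing happens once over the fully loaded page instead of after the first scroll. The `max_rounds` cap is there so that a feed which never stops growing cannot loop forever.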

